
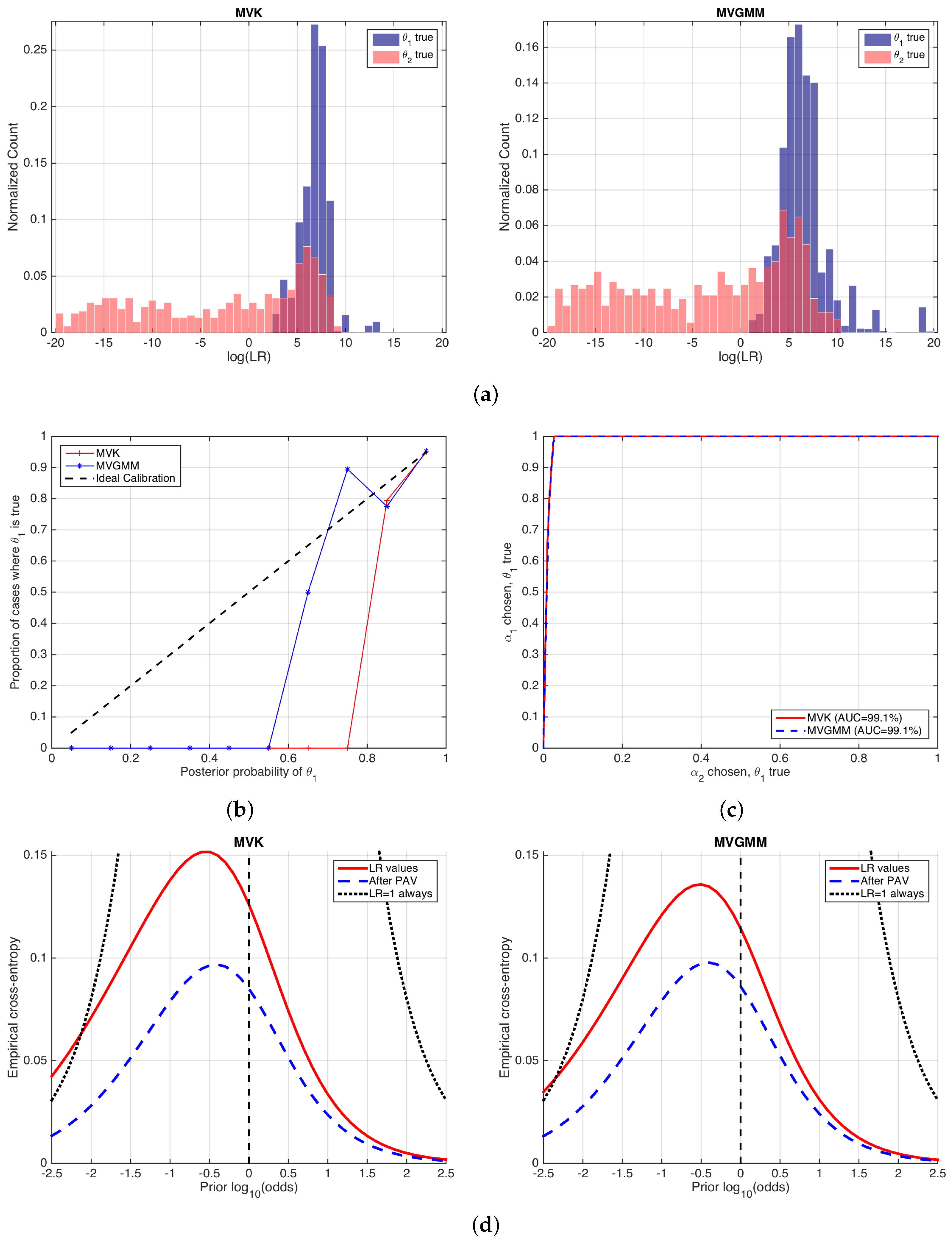

9/22/2023

Binary cross entropy

Intuitively, cross-entropy says the following: if I have a bunch of events and a bunch of probabilities, how likely is it that those events happen, taking those probabilities into account? If it is likely, the cross-entropy will be small; otherwise, it will be big.

After using TensorFlow for quite a while, I have read some Keras tutorials and implemented some examples. I have found several tutorials for convolutional autoencoders that use binary cross-entropy as the loss function.

Cross-Entropy Loss for Binary Classification

Let's start this section by reviewing the log function on the interval $(0, 1]$. As $\log(0) = -\infty$, we add a small offset and start with 0.001 as the smallest value in the interval. Plotting the values of $\log(x)$ and $-\log(x)$ in the range 0 to 1 shows that $-\log(x)$ grows without bound as $x$ approaches 0 and falls to 0 at $x = 1$: a confident wrong prediction is penalized heavily, while a confident correct one costs almost nothing.

For $m$ samples with true labels $y_i \in \{0, 1\}$ and predicted probabilities $p_i$ of the positive class, the binary cross-entropy is:

$$\mathrm{BCE} = -\frac{1}{m}\sum_{i=1}^{m} \left[ y_i \log(p_i) + (1 - y_i) \log(1 - p_i) \right]$$

If $y_i$ is 1, the second term of the sum is 0; likewise, if $y_i$ is 0, the first term goes away. Essentially, it boils down to the negative log of the probability associated with your true class label.
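As a minimal stand-in for a plotting snippet, here is a pure-Python sketch (standard library only, so it prints a table instead of drawing a plot). It tabulates $\log(x)$ and $-\log(x)$ starting from the 0.001 offset, and computes the mean binary cross-entropy $-\frac{1}{m}\sum_i [y_i\log p_i + (1-y_i)\log(1-p_i)]$, clipping each probability to $[\epsilon, 1-\epsilon]$ so $\log(0)$ is never evaluated. The names `log_table` and `binary_cross_entropy` and the value $\epsilon = 10^{-7}$ are my own illustrative choices, not a library API.

```python
import math

def log_table(start=0.001, stop=1.0, steps=5):
    """Return (x, log(x), -log(x)) for evenly spaced x in [start, stop].

    Starting at a small positive offset avoids log(0) = -inf.
    """
    xs = [start + i * (stop - start) / (steps - 1) for i in range(steps)]
    return [(x, math.log(x), -math.log(x)) for x in xs]

def binary_cross_entropy(y_true, p_pred, eps=1e-7):
    """Mean binary cross-entropy over m samples (hypothetical helper).

    y_true: labels in {0, 1}; p_pred: predicted probabilities of class 1.
    Probabilities are clipped to [eps, 1 - eps] so log(0) never occurs.
    """
    if not y_true or len(y_true) != len(p_pred):
        raise ValueError("y_true and p_pred must be non-empty and equal length")
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)
        # For y == 1 only log(p) contributes; for y == 0 only log(1 - p) does.
        total += y * math.log(p) + (1 - y) * math.log(1 - p)
    return -total / len(y_true)

for x, lx, nlx in log_table():
    print(f"x={x:.4f}  log(x)={lx:+.4f}  -log(x)={nlx:.4f}")

# Confident, correct predictions give a small loss; confident wrong ones a large loss.
print(binary_cross_entropy([1, 0, 1], [0.9, 0.1, 0.8]))
print(binary_cross_entropy([1, 0, 1], [0.1, 0.9, 0.2]))
```

The clipping mirrors the "small offset" idea: without it, a prediction of exactly 0 for a positive label would make the loss infinite.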

Binary cross-entropy is a simplification of the cross-entropy loss function applied to cases where there are only two output classes. The cross-entropy between two probability distributions $p$ and $q$ is defined as:

$$H(p, q) = -\sum_{x} p(x) \log q(x)$$

If we expect a binary outcome from our function, it makes sense to compute the cross-entropy loss on Bernoulli random variables. By definition, the probability mass function $g$ of a Bernoulli distribution with parameter $p$, over the possible outcomes $x \in \{0, 1\}$, is:

$$g(x \mid p) = p^{x} (1 - p)^{1 - x}$$
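To make the link between the general definition and the binary case concrete, here is a minimal sketch in plain Python. It assumes discrete distributions are represented as dicts mapping each outcome to its probability (an illustrative choice, not a library API), evaluates $H(p, q) = -\sum_x p(x)\log q(x)$ between the "true" Bernoulli distribution of a label and the predicted Bernoulli distribution, and checks that the result equals the per-sample binary cross-entropy $-[y\log\hat p + (1-y)\log(1-\hat p)]$.

```python
import math

def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) * log q(x) for discrete distributions given as dicts.

    Outcomes with p(x) == 0 are skipped (0 * log q = 0 by convention).
    If q(x) == 0 where p(x) > 0, math.log raises ValueError (H is infinite).
    """
    return -sum(px * math.log(q[x]) for x, px in p.items() if px > 0)

def bernoulli_pmf(x, p):
    """g(x | p) = p**x * (1 - p)**(1 - x) for x in {0, 1}."""
    return p ** x * (1 - p) ** (1 - x)

def bernoulli(p):
    """Bernoulli(p) as a dict over the two outcomes {0, 1}."""
    return {x: bernoulli_pmf(x, p) for x in (0, 1)}

y, p_hat = 1, 0.8  # true label and predicted probability of class 1
h = cross_entropy(bernoulli(y), bernoulli(p_hat))
bce = -(y * math.log(p_hat) + (1 - y) * math.log(1 - p_hat))
print(h, bce)  # both are -log(0.8)
```

A label $y$ is treated as a degenerate Bernoulli distribution that puts all its mass on the observed outcome, so only one term of $H$ survives, which is exactly why one of the two BCE terms vanishes for each sample.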

In order to find optimal weights for classification, we need an error function that can be minimized, and cross-entropy is one such function. As far as I know, cross-entropy measures how far apart two probability distributions over the same set of events are: it is the average number of bits needed to encode events drawn from one distribution using a code optimized for the other (bits when the logarithm is base 2). Cross-entropy is also equivalent to the negative log-likelihood: with independent Bernoulli-distributed labels, the per-sample cross-entropy between the true label and the predicted probability is exactly the negative log of the probability the model assigns to the observed label, so summing it over the samples gives the negative log-likelihood of the whole data set.

Let's say I'm trying to classify some data with logistic regression. The weighted sum of the inputs is passed to the logistic function, which maps it into the range $(0, 1)$, and the weights must be optimized so that these outputs match the desired labels.

The simplest form of cross-entropy loss is known as binary cross-entropy (used as the loss function for binary classification, e.g., with logistic regression), whereas the generalized version is categorical cross-entropy (used as the loss function for multi-class classification problems, e.g., with neural networks).

Binary cross-entropy and logistic regression: ever wondered why we use it, where it comes from, and how to optimize it efficiently? Here is one explanation (code included).
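Below is a minimal sketch of logistic regression fitted by batch gradient descent on the mean binary cross-entropy, in plain Python. For a sigmoid output, the gradient of the loss with respect to weight $w_j$ works out to the mean of $(p_i - y_i)\,x_{ij}$ (and the mean of $p_i - y_i$ for the bias), which is what the hypothetical `fit` helper uses. The toy data, the learning rate 0.5 and the 2000 epochs are made-up illustrative values. The last lines compute the negative log-likelihood of the labels directly, to show that it matches the mean BCE.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, b, x):
    """Predicted probability of class 1 for one input vector x."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def mean_bce(w, b, X, y):
    """Mean binary cross-entropy of the model (w, b) on the data (X, y)."""
    total = 0.0
    for xi, yi in zip(X, y):
        p = predict(w, b, xi)
        total += yi * math.log(p) + (1 - yi) * math.log(1 - p)
    return -total / len(y)

def fit(X, y, lr=0.5, epochs=2000):
    """Batch gradient descent: dL/dw_j = mean((p - y) * x_j), dL/db = mean(p - y)."""
    m, n = len(X), len(X[0])
    w, b = [0.0] * n, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * n, 0.0
        for xi, yi in zip(X, y):
            err = predict(w, b, xi) - yi  # p - y
            for j in range(n):
                gw[j] += err * xi[j] / m
            gb += err / m
        w = [wj - lr * gj for wj, gj in zip(w, gw)]
        b -= lr * gb
    return w, b

X = [[0.5], [1.0], [1.5], [3.0], [3.5], [4.0]]  # toy 1-D inputs (made up)
y = [0, 0, 1, 0, 1, 1]                          # toy labels, not linearly separable
w, b = fit(X, y)
loss = mean_bce(w, b, X, y)

# Negative log-likelihood of the observed labels under the fitted model, divided by m.
lik = math.prod(predict(w, b, xi) if yi == 1 else 1 - predict(w, b, xi)
                for xi, yi in zip(X, y))
nll = -math.log(lik) / len(y)
print(w, b, loss, nll)  # loss and nll agree up to rounding
```

The toy labels are deliberately not linearly separable, so the minimum is finite. On perfectly separable data the weights keep growing, so in practice one adds regularization or clips the predicted probabilities.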
